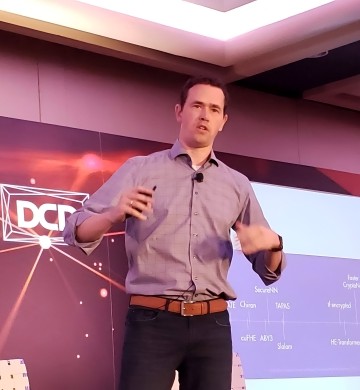

I'm Jan - Fractional Leader

Proven Experience. On-Demand Expertise.

Smarter, Faster Growth.

Executive Expertise, On Your Terms

Start-ups and scale-ups desperately need senior leadership but often lack the budget for a full-time hire. I bridge this gap by offering C-level expertise in a flexible and cost-effective way.

I support Private Equity and VC firms during the mutual due diligence process, leveraging my deep operational and technical experience. (Currently engaged exclusively with Fortino Capital PE).

Where Strategy Meets Execution

A winning strategy is only as good as its execution. My unique background, spanning from hands-on engineering to C-level business leadership, allows me to bridge the crucial gap between your vision and technical reality. I ensure your product, technology, and business strategies are not just aligned, but fully integrated for maximum impact.

My name is Jan. I am a versatile technology executive, investor, and entrepreneur with a proven track record of driving success in the B2B SaaS industry. For over 15 years, I have co-founded and scaled multiple software companies, leading them from concept to successful exits through private equity or strategic acquisition. My experience is a unique blend of C-level leadership (having served as a CEO, COO, and CTO) and deep technical expertise in Cloud, Machine Learning, and Edge computing. I offer current, real-world insights to help you navigate the evolving tech landscape and achieve your goals.

I have hands-on experience growing products from concept to $80M in revenue and have successfully executed M&A transactions and fundraising initiatives.

My ability to lead as a CEO, COO, CPO, and CTO allows me to guide both overall business strategy and complex product development with a unified vision.

Rooted in my background as an engineer, architect, and CTO, I have a deep technical understanding of Cloud, Big Data, Machine Learning, and Edge computing, allowing me to connect their capabilities to clear business benefits and the creation of truly impactful products.

I had the pleasure of working with Jan at Spoken Communications, prior to our acquisition by Avaya. By definition, he exemplifies the "bull's-eye" where technical depth and strategy, an ability to understand and relate to people, and organizational strategy, alignment and process, all meet up. Always the voice of reason in the room. Integrity, Intelligence, Action and a make-it-happen senior executive.

Brian Sliverman

Founder Five9, Ex-Amazon VP

Oh where to start about Jan. I had the pleasure of working under Jan while I was at Spoken and later at Avaya. Jan is a fantastic leader that knows how a cloud business should operate. Jan is sharp, dedicated, passionate about the work, and most importantly, really cares about the people. He is someone I trust and respect. I wouldn't hesitate at all for the chance to work with Jan again on future endeavors. I hope our paths are more like a slight deviation than different directions.

John Shao

CTO Pipe17, ex-Oracle, ex-VMware

Jan is the most exceptional leader in cloud computing. He and I worked together as peers for several years at SDL. During that time, I had first-hand experience with Jan's unique combination of visionary leadership and pragmatic execution. He drove multiple initiatives to move our offerings from a mix of private cloud SaaS and on-premise software to public cloud (primarily in AWS). In those projects, Jan demonstrated both strategic vision and practical execution.

Daryl Orts

CTO / CPO

I met Jan in September of 2008 when we first started Data Center Pulse. At that time he was working for the Dutch Police Force running their infrastructure. I was quickly impressed with his capabilities and drive and asked him to represent DCP in Europe. Jan is a full stack guy who understands the technology, the business, and the market. He's also someone I respect and trust.

Dean Nelson

ex-Uber VP, ex-eBay VP

— Companies

Looking for collaboration?

Schrikslaan 74, Soest, the Netherlands

300 Lenora St, Seattle WA, USA